Settings

The Settings view lets you configure global settings for the repository and test plan.

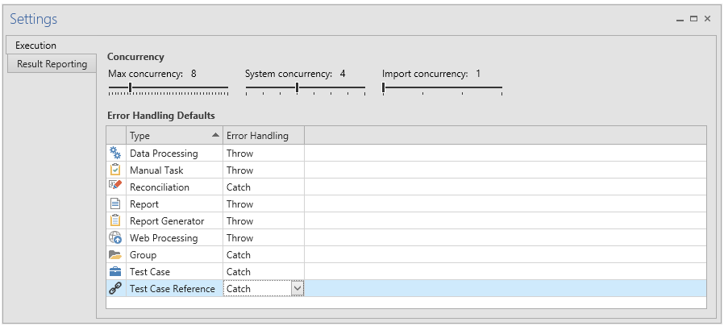

Execution

The Execution tab allows you to configure various settings related to execution and error handling.

Concurrency

In the Concurrency section, you configure the maximum concurrency for three separate pools.

Maximum concurrency defines the maximum number of tasks that can run at the same time, in total. All tasks consume a slot from this pool, and the setting should be made considering memory, processor cores and concurrent users on the desktop where Autotest is running. Reasonable values are 2-10.

System concurrency defines the maximum number of tasks that can be executed on any given system, as defined in Login Aliases. Any task that requires a login uses a slot from this resource pool, e.g. reports and data processing. The setting should be made considering the performance of the application server of the underlying system when reading data. Reasonable values are 2-4.

Import concurrency defines the maximum number of import (Data Processing) tasks that can run concurrently per system. Because imports can trigger additional actions in the underlying system, they may be much more resource intensive than read operations, so their concurrency should be limited. Only Data Processing tasks use a slot from this pool. Reasonable values are 1-2.
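The way the three pools combine can be illustrated with a small model. This is only a sketch of the rules described above, not how Autotest is implemented: the `Pools` class, its field names, and the system name `ERP` are hypothetical, and releasing slots when a task finishes is left out for brevity.

```python
from collections import Counter
from dataclasses import dataclass, field


@dataclass
class Pools:
    """Hypothetical model of the three concurrency limits."""
    max_concurrency: int = 4      # total slots, used by every task
    system_concurrency: int = 2   # slots per system, used by tasks that log in
    import_concurrency: int = 1   # slots per system, used by Data Processing imports
    running_total: int = 0
    running_per_system: Counter = field(default_factory=Counter)
    imports_per_system: Counter = field(default_factory=Counter)

    def can_start(self, system=None, is_import=False):
        # Every task needs a free slot in the total pool.
        if self.running_total >= self.max_concurrency:
            return False
        # Tasks that log in to a system also need a slot on that system.
        if system is not None and self.running_per_system[system] >= self.system_concurrency:
            return False
        # Imports additionally need a slot in the per-system import pool.
        if is_import and system is not None and self.imports_per_system[system] >= self.import_concurrency:
            return False
        return True

    def start(self, system=None, is_import=False):
        """Take the required slots and return True, or return False if any pool is full."""
        if not self.can_start(system, is_import):
            return False
        self.running_total += 1
        if system is not None:
            self.running_per_system[system] += 1
            if is_import:
                self.imports_per_system[system] += 1
        return True


pools = Pools(max_concurrency=3, system_concurrency=2, import_concurrency=1)
print(pools.start("ERP", is_import=True))  # True: first import on ERP
print(pools.start("ERP", is_import=True))  # False: import pool for ERP is full
print(pools.start("ERP"))                  # True: second system slot on ERP
print(pools.start("ERP"))                  # False: system pool for ERP is full
```

In this model, an import counts against all three limits at once, which is why the import limit is usually the first one reached.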

Error Handling Defaults

In the Error Handling Defaults section you configure the default behavior for test plan nodes, per node type.

The two options are:

- Stop - If an error occurs in this node or one of its children, the error is passed up to the node's parent.

- Continue - If an error occurs in this node or one of its children, the error is captured and not propagated to the parent.

For tasks, an error is defined as Success Rate < 100%. For groups, an error is defined as any error propagated from the group's immediate children. For a group to receive an error, a child task has to fail and the task has to be configured to Throw.
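The propagation rules can be sketched as a small recursive check. This is an illustrative model only: the `Node` class and the `propagates_error` function are hypothetical names, and the sketch covers only whether an error reaches the parent, not whether remaining sibling nodes are still executed.

```python
from dataclasses import dataclass, field
from typing import List, Optional


@dataclass
class Node:
    """Hypothetical test plan node: a task if success_rate is set, otherwise a group."""
    name: str
    on_error: str = "Stop"                 # "Stop" or "Continue"
    success_rate: Optional[float] = None   # 0.0 to 1.0 for tasks, None for groups
    children: List["Node"] = field(default_factory=list)


def propagates_error(node: Node) -> bool:
    """Return True if this node passes an error up to its parent."""
    if node.success_rate is not None:
        # Task: an error is a success rate below 100%.
        has_error = node.success_rate < 1.0
    else:
        # Group: an error is anything propagated by an immediate child.
        has_error = any(propagates_error(child) for child in node.children)
    # "Stop" passes the error up; "Continue" captures it.
    return has_error and node.on_error == "Stop"


failing_task = Node("Import rates", on_error="Continue", success_rate=0.5)
group = Node("Nightly run", on_error="Stop", children=[failing_task])
print(propagates_error(group))  # False: the task captures its own error

failing_task.on_error = "Stop"
print(propagates_error(group))  # True: the error passes through the task and the group
```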

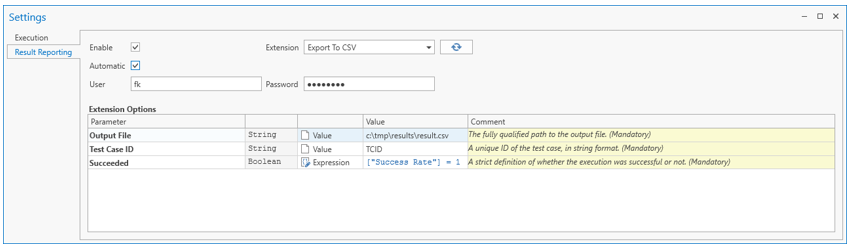

Result Reporting

The Result Reporting tab allows you to configure a result reporting extension. If configured, the extension is triggered after any collateral is executed. It allows custom integration and synchronization with, for example, test management systems or audit systems.

Select among available extensions from the Extension drop-down.

The Enable switch toggles the use of the result reporting function on or off globally for the repository. With result reporting enabled, you can configure the extension and Test Plan items for result reporting.

The Automatic switch toggles automatic execution of the result reporting extension on or off. When Automatic result reporting is turned on, the extension will run after each execution. When it is turned off, the extension is never run automatically and must instead be triggered manually.

Parameters in bold are mandatory, others are optional. You can populate a parameter value with a plain value, a variable reference, or an expression.

Plain values are useful for global settings, such as paths or URLs, whereas variable references and expressions are useful to communicate contextual and runtime information such as a test case ID.

Credentials

User and password credentials can be supplied using the corresponding text boxes. Refer to the documentation of the extension you are using to determine whether you need to supply credentials or not.

Expression parameters

Expression parameters use the OmniFi expression language.

Variable references

Variables can be referenced using the variable reference syntax: $Variable Name$.

Execution result values

Execution-specific values can be referenced using the column reference syntax: [ColumnName].
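To make the two reference syntaxes concrete, the sketch below fills both kinds of placeholder in a plain string. It is not the OmniFi expression language and not how the product resolves parameters. The `resolve` function, the variable name `Base Url`, and the sample values are assumptions made for illustration.

```python
import re


def resolve(template, variables, result):
    """Fill $Variable Name$ and [ColumnName] placeholders in a template string."""
    # Variable references: $Variable Name$ (names may contain spaces).
    text = re.sub(r"\$([^$]+)\$", lambda m: str(variables[m.group(1)]), template)
    # Column references: [ColumnName] (execution result values).
    return re.sub(r"\[(\w+)\]", lambda m: str(result[m.group(1)]), text)


variables = {"Base Url": "https://example.invalid/api"}   # hypothetical variable
result = {"TestCaseReference": 1042, "SuccessRate": 1.0}  # hypothetical execution values

print(resolve("$Base Url$/cases/[TestCaseReference]", variables, result))
# https://example.invalid/api/cases/1042
```

Here the plain part of the value (`/cases/`) stays fixed, while the variable and the column reference are filled in at run time.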

The following execution result values are available:

| Option | Type | Description |
|---|---|---|
| Id | int | The DB id of the reported item. |
| Type | string | The type of item being reported: |
| TestCaseReference | int | The reference id by which the item was executed, or None if the item was executed directly. |
| Name | string | The name of the executed item. |
| Path | string | The full path of the item. |
| LastExecuted | date | The date and time of the execution. |
| SuccessRate | double | The rate of success of the execution (0 <= n <= 1). |
| Duration | double | The duration of the execution in seconds. |
| Error | string | Any error encountered during execution. |
| ExecutionId | int | The DB ID of the execution log. |
| ExecutedBy | string | The user who triggered the execution. |
| ExcelLogFile | string | The path to the Excel output file of the execution. Note that this is None for items that are not tasks. Note that the path is relative to the client. If the extension is executed server side, the file will not be accessible. |
| DebugLogFile | string | The path to the debug log file (RTF). |
| Comment | string | The comment of the executed item. |
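If you handle these values in your own integration code, it can help to mirror the table as a typed record. The sketch below is one possible shape, not an interface provided by the product. The `ExecutionResult` class, the `passed` property, and all sample values are hypothetical, and treating `Error`, `DebugLogFile` and `Comment` as optional is an assumption.

```python
from dataclasses import dataclass
from datetime import datetime
from typing import Optional


@dataclass
class ExecutionResult:
    """Hypothetical record mirroring the execution result values table."""
    Id: int
    Type: str
    TestCaseReference: Optional[int]   # None if the item was executed directly
    Name: str
    Path: str
    LastExecuted: datetime
    SuccessRate: float                 # 0 <= n <= 1
    Duration: float                    # seconds
    Error: Optional[str]               # assumed empty when no error occurred
    ExecutionId: int
    ExecutedBy: str
    ExcelLogFile: Optional[str]        # None for items that are not tasks
    DebugLogFile: Optional[str]
    Comment: Optional[str]

    def __post_init__(self):
        # Guard against values outside the documented range.
        if not 0.0 <= self.SuccessRate <= 1.0:
            raise ValueError("SuccessRate must be between 0 and 1")

    @property
    def passed(self) -> bool:
        # A task counts as failed when its success rate is below 100%.
        return self.SuccessRate == 1.0


sample = ExecutionResult(
    Id=1, Type="Task", TestCaseReference=None, Name="Import rates",
    Path="Nightly run/Import rates", LastExecuted=datetime(2024, 1, 15, 2, 30),
    SuccessRate=0.75, Duration=12.5, Error="3 rows rejected", ExecutionId=77,
    ExecutedBy="jdoe", ExcelLogFile=None, DebugLogFile=None, Comment=None,
)
print(sample.passed)  # False
```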
